And why the explanation is organisational, not technical

Enterprise AI is not failing because the models are weak.

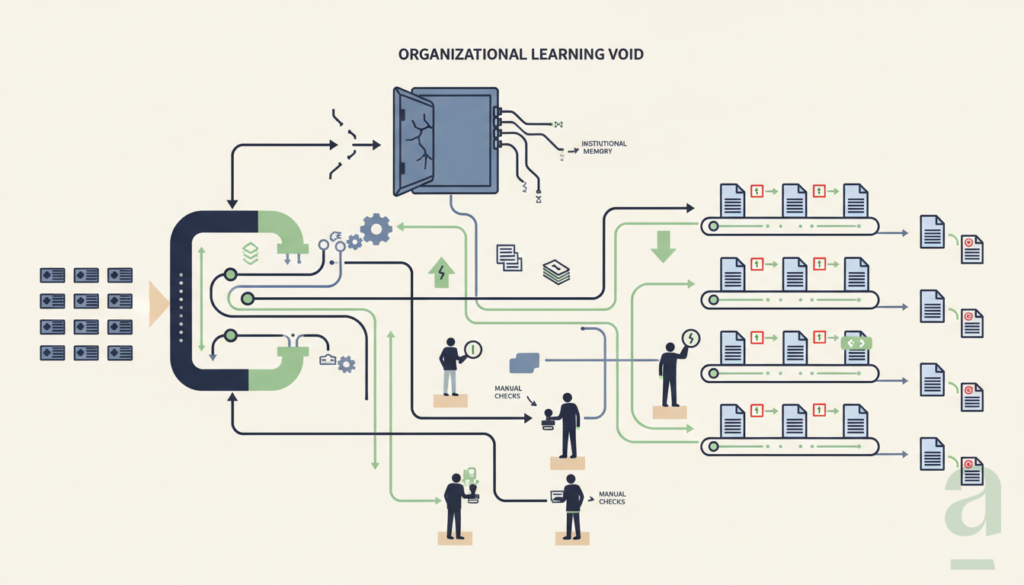

It is failing because most organisations are not designed to absorb, validate, and compound intelligence.

That matters because the current discussion still treats AI as a technology adoption problem. The assumptions are familiar: the tools will improve, the data estate will mature, regulation will stabilise, scarce talent will gradually become available. Until then, progress will be uneven but understandable.

That explanation is incomplete.

Organisations with access to the same models, the same cloud infrastructure, and often the same implementation partners produce very different outcomes. Some move beyond pilots and begin embedding AI into daily operations. Many others do not. They run experiments, generate internal enthusiasm, build demos that look convincing, and still fail to create durable value.

The technological explanation does not account for that difference.

The real divide is organisational.

The pattern is visible everywhere

Most organisations are capable of launching an AI initiative.

They can identify a use case, secure a budget, assemble a team, connect a model to a process, and demonstrate promising results in a controlled setting. That is no longer exceptional. The threshold for starting has fallen dramatically.

What remains rare is turning that initial promise into an enduring operational capability.

This is where most initiatives stall. Not at the moment of experimentation, but at the moment they need to become part of the organisation’s normal functioning. The pilot works. The organisation does not change around it. The result is familiar: isolated deployments, local optimisation, no lasting shift in how decisions are made or how work improves over time.

That is why so much AI activity produces so little compounding value.

The problem is not adoption.

The problem is that scaling requires an organisation to learn, and most operating models do not know how to make that learning durable.

Pilots succeed where organisations can still compensate

A pilot is a protected environment.

The scope is narrow. The context is curated. Experienced people stay close to the output. When the system is wrong, they correct it. When it lacks nuance, they add it. When it misreads the situation, they compensate with experience and informal coordination.

This is one reason AI pilots often look more robust than they really are.

In the pilot phase, organisations borrow intelligence from the people around the system. The machine appears more capable because the surrounding human structure quietly fills the gaps.

Once the initiative moves into broader production, that compensation becomes harder to sustain. The volume increases. The number of edge cases rises. Context changes faster. Ownership becomes diffuse. At that point, the absence of organisational learning becomes visible.

The system may continue producing outputs, but the organisation does not become more capable.

It repeats.

What is missing is not only memory in the model

Much of the current conversation about memory focuses on technical architecture: retrieval layers, vector stores, agent memory, context windows, orchestration frameworks. Those are relevant, but they are not the main issue.

The deeper issue is organisational memory.

Most AI deployments are not connected to a disciplined mechanism for capturing what happened, why it happened, how the outcome was judged, what proved wrong, and what should change next time. In other words: the organisation has no designed way to turn experience into reusable intelligence.

That makes scaling impossible.

Real work is cumulative. Decisions depend on prior decisions. Exceptions recur. Judgement improves through feedback. Quality increases when errors are recognised, interpreted, and incorporated into the next cycle. If that loop is absent, neither the people nor the systems around them truly improve.

An AI capability without designed memory remains permanently dependent on local heroics.

It can perform, but it does not compound.

A concrete example

Take a manufacturing environment where AI is used to support quality inspection.

A pilot may show very promising results. The model flags anomalies, operators review them, false positives are manageable, and early defect detection looks valuable. Leadership concludes that the use case is proven.

Then the system is rolled out more broadly.

Different product variants begin to appear. Production conditions shift. Certain defect types recur but are interpreted differently across teams. Experienced supervisors override the system in recurring situations, but those corrections are not systematically captured. No one owns the discipline of translating those interventions into shared operational learning. The model continues to classify. People continue to correct. The organisation learns informally, but the system does not improve in a structured way.

After some time, trust erodes. Local teams work around the system. The initiative survives on paper but weakens in practice.

The failure is then described as an AI problem.

Usually it is not.

The model was only part of the story. What was really missing was architectural: no designed process to capture overrides, validate reasoning, assign ownership, and feed the learning back into operations.

The same pattern appears in claims handling, service management, procurement, sales support, legal review, and knowledge work more broadly. The use case changes. The structural failure does not.
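To make the missing process in the inspection example more tangible, here is a minimal sketch of what capturing overrides could look like in code. Everything in it is an assumption made for illustration: the `Override` fields, the `OverrideLog` class, and the sample labels are hypothetical and do not describe any particular product or plant. The point is only that each correction gets recorded with a reason, an owner, and a validation status, so that recurring disagreements become visible instead of staying in people's heads.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Override:
    """One human correction of a model decision (hypothetical schema)."""
    item_id: str
    model_label: str              # what the model said
    human_label: str              # what the supervisor decided instead
    reason: str                   # why the supervisor disagreed
    owner: Optional[str] = None   # who is accountable for follow-up
    validated: bool = False       # has the reasoning been reviewed?


class OverrideLog:
    """Illustrative in-memory store; a real one would be persistent."""

    def __init__(self) -> None:
        self._entries: List[Override] = []

    def capture(self, override: Override) -> None:
        self._entries.append(override)

    def unowned(self) -> List[Override]:
        # Corrections nobody is accountable for turning into shared learning.
        return [o for o in self._entries if o.owner is None]

    def recurring(self, min_count: int = 2) -> List[Tuple[Tuple[str, str], int]]:
        # Validated (model_label, human_label) disagreements seen at least
        # min_count times: candidates to feed back into operations.
        counts = Counter(
            (o.model_label, o.human_label)
            for o in self._entries
            if o.validated
        )
        return [(pair, n) for pair, n in counts.most_common() if n >= min_count]


log = OverrideLog()
log.capture(Override("A1", "defect", "ok", "glare on variant B",
                     owner="qa-lead", validated=True))
log.capture(Override("A2", "defect", "ok", "glare on variant B",
                     owner="qa-lead", validated=True))
log.capture(Override("A3", "ok", "defect", "hairline crack"))

print(log.recurring())      # recurring, validated disagreements
print(len(log.unowned()))   # corrections without an owner
```

The code itself is trivial, and that is the argument: the hard part is not the data structure but deciding who fills in `owner`, who sets `validated`, and who acts on what `recurring()` returns.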

Why large organisations struggle disproportionately

Large enterprises are often highly active in AI. They invest heavily, run multiple pilots, establish governance boards, appoint responsible leaders, and work with top-tier vendors. From the outside, they appear well positioned.

Yet many still struggle to convert activity into enterprise-wide capability.

That is not a contradiction. It is a consequence of their operating design.

Large organisations are usually built to optimise control, consistency, specialisation, and risk management. Those design choices made sense in a world where work was more stable and the main challenge was efficient execution. AI introduces a different requirement. It needs organisations that can integrate distributed learning, clarify decision ownership, redesign workflows, and adapt based on feedback without collapsing into fragmentation.

Many enterprises have the budget for AI, but not the organisational metabolism for it.

They can fund experiments. They struggle to redesign the routines, governance, and accountability structures that would allow intelligence to accumulate across the business.

That is why the gap between pilot success and production value is so persistent.

Why the productivity framing leads organisations astray

A second structural error sits underneath many AI programmes.

They begin with the question: how can we make people faster?

That framing almost always produces narrow productivity use cases. Drafting support. Meeting summaries. Ticket routing. Search assistance. Task acceleration. These can be useful. Some create genuine local value.

But as a dominant framing, productivity is too small.

It measures throughput more easily than capability. It tracks activity more naturally than judgement. It encourages organisations to ask how work can be accelerated before asking whether work should be redesigned.

This matters because enterprise value rarely comes from speed alone. It comes from better decisions, fewer repeated errors, stronger coordination, more reliable knowledge, and the ability to improve outcomes over time. Those are not merely productivity gains. They are design gains.

If AI is introduced into a weak information environment, it tends to amplify the weakness. It produces more output inside structures that still do not know what counts as valid knowledge, who decides, which version matters, or how learning is retained.

That is why many organisations become more active without becoming more effective.

Information architecture and organisational architecture cannot be separated

This is the part many AI programmes still miss.

AI is not simply another software layer to be integrated into an existing stack. It changes the relationship between information, decisions, and action. That means the question is not only which model to use, or which platform to standardise on, or which policies to write.

The question is how the organisation produces, validates, routes, and reuses intelligence.

That is not an IT concern alone. It is not a data concern alone either. It is a management concern.

Who owns a correction once it has been made?

Where is that judgement captured?

How does it become reusable by others?

How are conflicting interpretations resolved?

Which feedback loops are formalised, and which remain invisible?

What happens when the system performs adequately in one team but creates downstream ambiguity elsewhere?

These are operational questions. They determine whether intelligence remains episodic or becomes structural.

An organisation that cannot answer them will keep running pilots.

An organisation that can answer them begins building capability.

The discipline that is missing

The failure of AI initiatives is often described as if organisations were waiting for the technology to mature.

In many cases, the technology is already sufficient.

What is missing is the discipline to treat information as something that must be designed as deliberately as finance, operations, or quality. Not as exhaust. Not as an accidental by-product of work. Not as something that will sort itself out once enough tools are deployed.

AI makes that omission impossible to ignore.

Adaptive systems placed inside organisations with weak information discipline do not produce transformation. They produce motion, experimentation, and often noise. The activity can be impressive. The value remains fragile.

That is why the path forward is not simply better models, larger budgets, or more enthusiastic adoption.

It is better design.

Information architecture and organisational architecture must be designed together. Feedback loops must be explicit. Decision ownership must be clear. Learning must be captured as an operational function, not left to informal human compensation around the edges of a pilot.

Memory is not a feature.

It is an organisational responsibility.

Without that responsibility, organisations do not scale AI. They repeat pilots and call it progress.