Part 3 of AI Transformation: From Ambition to Impact

AI initiatives create value at scale when they operate inside the analytical and operational context of the business. Outside of it, they produce demos.

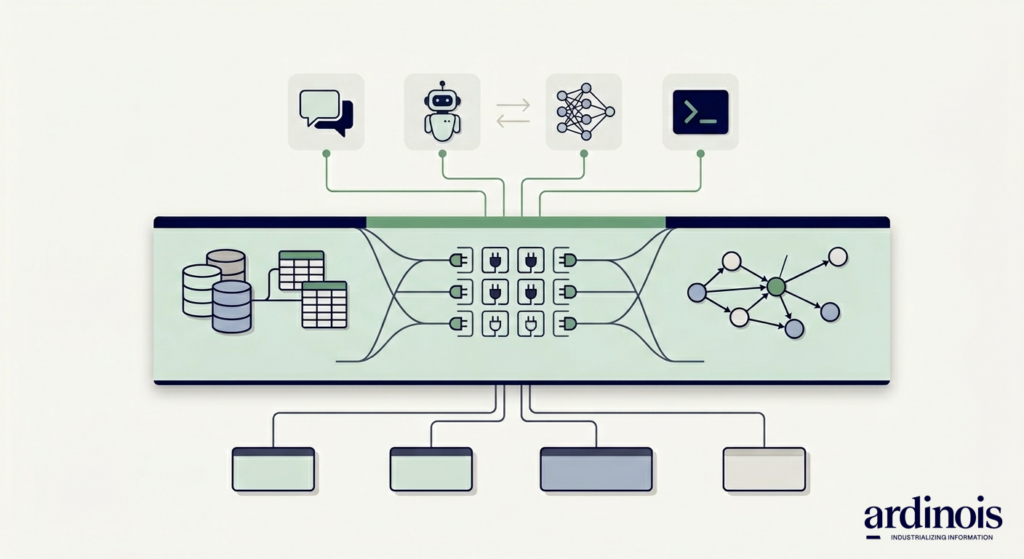

That context is not abstract. It sits in data warehouses, transactional systems, planning tools, CRM, ERP, content platforms, and the tacit knowledge of the people who work with them. For AI to automate a process or support a decision, it has to reach into these systems, understand what it finds there, and act in a way the rest of the organization can trust.

This is the data and integration layer. It is the most underinvested part of most AI programs, and the most decisive.

What sits in this layer

Three components carry most of the weight.

Data landscape. The tables, models, and flows that describe the business. Not only the data warehouse. The operational data in source systems, the lineage that connects them, and the quality signals that tell you which fields to trust. Without this, an AI system cannot tell the product master apart from a stale export.
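To make the point about lineage and quality signals concrete, here is a minimal sketch of a catalog lookup that prefers a certified, fresh source over a stale copy. Everything in it is an assumption for illustration: the `DatasetRecord` fields, the dataset names, and the freshness and completeness thresholds are hypothetical, not a reference to any particular catalog product.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DatasetRecord:
    """Hypothetical catalog entry: one dataset plus its trust signals."""
    name: str
    concept: str                 # business concept the dataset describes
    upstream: tuple[str, ...]    # lineage: datasets this one is derived from
    last_refreshed: date
    completeness: float          # share of required fields populated, 0..1
    certified: bool              # signed off by a data owner


def pick_trusted(
    records: list[DatasetRecord],
    concept: str,
    today: date,
    max_age_days: int = 2,          # assumed freshness threshold
    min_completeness: float = 0.95, # assumed quality threshold
) -> DatasetRecord | None:
    """Return the freshest certified dataset for a concept, or None."""
    candidates = [
        r for r in records
        if r.concept == concept
        and r.certified
        and (today - r.last_refreshed).days <= max_age_days
        and r.completeness >= min_completeness
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda r: (r.last_refreshed, r.completeness))


# Illustrative entries only: a product master and a stale export of it.
CATALOG = [
    DatasetRecord("erp.product_master", "product", ("erp.items",),
                  date(2024, 5, 1), 0.99, True),
    DatasetRecord("shared_drive.product_export_2023", "product",
                  ("erp.product_master",), date(2023, 11, 14), 0.91, False),
]

chosen = pick_trusted(CATALOG, "product", today=date(2024, 5, 2))
```

Returning `None` when nothing qualifies is deliberate in this sketch. An agent that is told "no trusted source exists" can escalate. An agent that is handed the stale export will answer confidently and wrongly.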

API and tool access. For agents to take action, they need programmable entry points into the systems they operate against. This is where protocols like the Model Context Protocol (MCP) and structured tool interfaces matter. The question is not whether an agent can call an API. It is whether the catalog of APIs available to it is curated, versioned, documented, and safe to invoke at scale.
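One way to picture a curated, versioned, documented, and safe catalog is a small registry that refuses undocumented tools and blocks state-changing calls unless the caller opts in. This is a sketch under stated assumptions, not an implementation of MCP or of any agent framework: `ToolSpec`, `ToolCatalog`, and the two order tools are hypothetical names invented for the example.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class ToolSpec:
    """Hypothetical description of one tool an agent may call."""
    name: str
    version: str
    description: str
    side_effects: bool               # True if the tool changes system state
    handler: Callable[..., Any]


class ToolCatalog:
    """Curated registry: only documented, uniquely versioned tools get in."""

    def __init__(self) -> None:
        self._tools: dict[tuple[str, str], ToolSpec] = {}

    def register(self, spec: ToolSpec) -> None:
        if not spec.description.strip():
            raise ValueError(f"{spec.name}: a description is required")
        key = (spec.name, spec.version)
        if key in self._tools:
            raise ValueError(f"{spec.name} {spec.version} is already registered")
        self._tools[key] = spec

    def invoke(self, name: str, version: str, *,
               allow_side_effects: bool = False, **kwargs: Any) -> Any:
        spec = self._tools.get((name, version))
        if spec is None:
            raise KeyError(f"unknown tool {name} {version}")
        # Safety gate: state-changing tools need an explicit opt-in.
        if spec.side_effects and not allow_side_effects:
            raise PermissionError(f"{name} changes state; approval required")
        return spec.handler(**kwargs)


# Stand-in handlers; a real deployment would call the order system here.
def get_order_status(order_id: str) -> dict:
    return {"order_id": order_id, "status": "shipped"}


def cancel_order(order_id: str) -> dict:
    return {"order_id": order_id, "cancelled": True}


catalog = ToolCatalog()
catalog.register(ToolSpec("get_order_status", "1.0",
                          "Read the current status of an order.",
                          side_effects=False, handler=get_order_status))
catalog.register(ToolSpec("cancel_order", "1.0",
                          "Cancel an open order. Irreversible.",
                          side_effects=True, handler=cancel_order))

status = catalog.invoke("get_order_status", "1.0", order_id="A-17")
```

Keying tools by name and version means an agent pinned to `1.0` keeps working when `2.0` is registered alongside it, which is the property that matters once many use cases share the same catalog.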

Semantics. This is the component most organizations skip. A semantic layer captures the business ontology: what a customer is, how an order relates to a shipment, what “active” means in three different systems, which KPI is the real one. It maps these concepts to the underlying data and operational systems. Done well, it also captures the tribal knowledge that a new analyst picks up in the first six months on the job. Which table has the clean version. Which field is deprecated. Which exception nobody documented.

A semantic layer is how you give an AI the context that a senior colleague holds in their head.
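A minimal sketch of what one entry in such a layer might look like, assuming a plain in-code representation rather than any specific semantic-layer product. The concept, system names, tables, filters, and notes below are all hypothetical. The point is the shape: one business definition, one canonical binding, the variants that exist in other systems, and the tribal knowledge attached to it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Binding:
    """Where a concept lives in one system (hypothetical structure)."""
    system: str
    table: str
    filter_sql: str


@dataclass(frozen=True)
class Concept:
    """One business concept with its canonical source and known caveats."""
    name: str
    definition: str
    canonical: Binding
    variants: tuple[Binding, ...] = ()
    deprecated_fields: tuple[str, ...] = ()
    notes: tuple[str, ...] = ()


def render_context(c: Concept) -> str:
    """Flatten a concept into plain text that can be handed to a model."""
    lines = [
        f"Concept: {c.name}",
        f"Definition: {c.definition}",
        f"Canonical source: {c.canonical.system}.{c.canonical.table} "
        f"WHERE {c.canonical.filter_sql}",
    ]
    for v in c.variants:
        lines.append(f"Variant in {v.system}: {v.table} WHERE {v.filter_sql}")
    for name in c.deprecated_fields:
        lines.append(f"Deprecated, do not use: {name}")
    for note in c.notes:
        lines.append(f"Note: {note}")
    return "\n".join(lines)


# Illustrative content only: "active" defined differently in three systems.
ACTIVE_CUSTOMER = Concept(
    name="active_customer",
    definition="Customer with at least one paid order in the last 12 months.",
    canonical=Binding("warehouse", "dim_customer", "paid_orders_12m > 0"),
    variants=(
        Binding("crm", "accounts", "status = 'Active'"),
        Binding("billing", "subscriptions", "end_date IS NULL"),
    ),
    deprecated_fields=("crm.accounts.is_active_flag",),
    notes=("Test accounts are not excluded in the CRM variant.",),
)

context = render_context(ACTIVE_CUSTOMER)
```

Rendering to plain text is a design choice of this sketch. It keeps the semantic model independent of whichever model or agent framework consumes it, which is the property the next section argues for.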

Why this is the structural bet right now

Models are changing quickly. Agent frameworks are changing quickly. The application layer will continue to churn for several years.

The integration layer does not churn in the same way. A well-organized data landscape, a clean API surface, and a coherent semantic model will serve whichever model and whichever agent framework you run on top of them. The better this foundation, the more value each new generation of capability produces, with less effort.

The inverse is also true. Weak foundations cap the value of even the strongest models. Every initiative pays a tax in bespoke data preparation, custom system integration, and context engineering. That tax does not go away with a better model. It scales with the number of use cases.

Where the value shows up

Four effects, each visible within a year of treating this layer as infrastructure.

Speed. Time to value on new use cases drops. Each initiative starts from a foundation rather than from scratch. The first use case in a domain is expensive. The tenth is cheap.

Flexibility. Models and agent frameworks change. The integration layer changes far more slowly. When a better model arrives, or a new orchestration pattern emerges, you swap it in on top of a stable foundation instead of rebuilding context.

Cross-value-chain leverage. Use cases built on shared data, APIs, and semantics start to reinforce each other. A forecasting model and a pricing agent can reason about the same product, the same customer segment, the same promotion mechanics. The whole is larger than the sum of its parts.

Compounding returns. Every improvement in model capability lands in an environment that already understands the business. More capable models produce more value against the same foundation, with no new integration work.

The output of all four is AI that operates with the context the rest of the organization takes for granted.

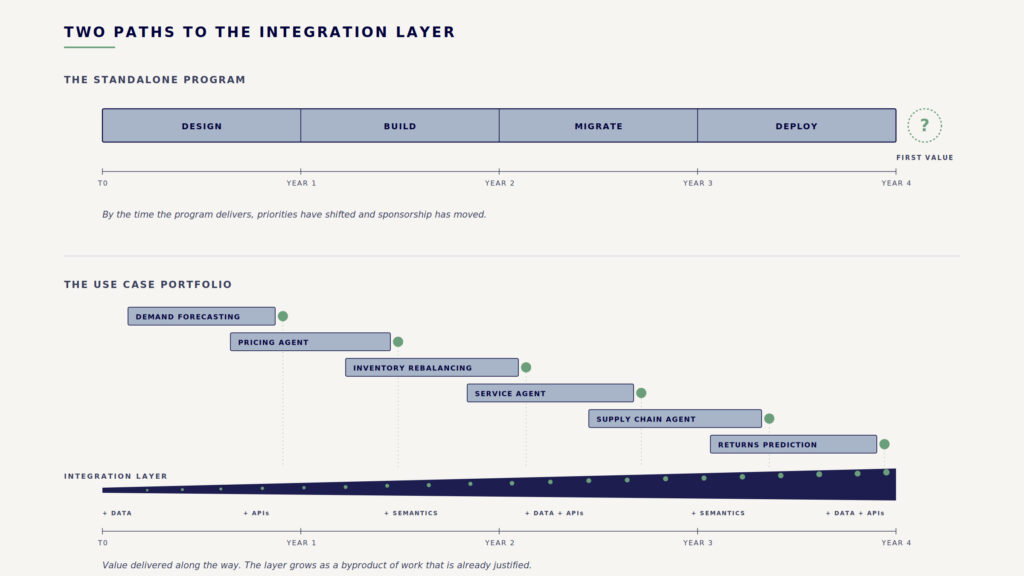

How not to build it

The instinct is to run a standalone program to rebuild the data and integration layer first, then deliver use cases on top. This rarely works. It is slow, expensive, and disconnected from the problems the business actually cares about. By the time the program delivers, priorities have shifted and sponsorship has moved on.

How to build it

Two things done together.

First, define the target architecture. Not a detailed blueprint of every system. A clear view of the data domains, the access patterns, the semantic model, and the standards new integrations follow. This gives every project something to build toward.

Second, build a portfolio of use cases that progressively fills in that architecture. Each use case extends data coverage, adds to the semantic model, hardens an API, or codifies a piece of tribal knowledge. Value is delivered along the way. The layer grows as a byproduct of work that is already justified.

This is the most important structural investment an AI program can make right now. Regardless of model. Regardless of vendor. Regardless of where agent tooling lands in eighteen months. The organizations that treat the integration layer as core infrastructure, and build it through their use case portfolio rather than around it, will compound value faster than the ones that do not.