The enterprise data landscape is evolving rapidly. Many traditional architectures are struggling to keep up, facing challenges with data silos, vendor dependencies, and technical debt that slows innovation. Meanwhile, realizing the full potential of AI-driven insights remains difficult for many businesses.

It’s worth considering a fresh approach — one that’s not just designed to serve AI, but is increasingly architected, managed, and optimized by AI itself. We are moving into an era where AI agents don’t just consume data; they actively help construct, govern, and evolve the data landscape.

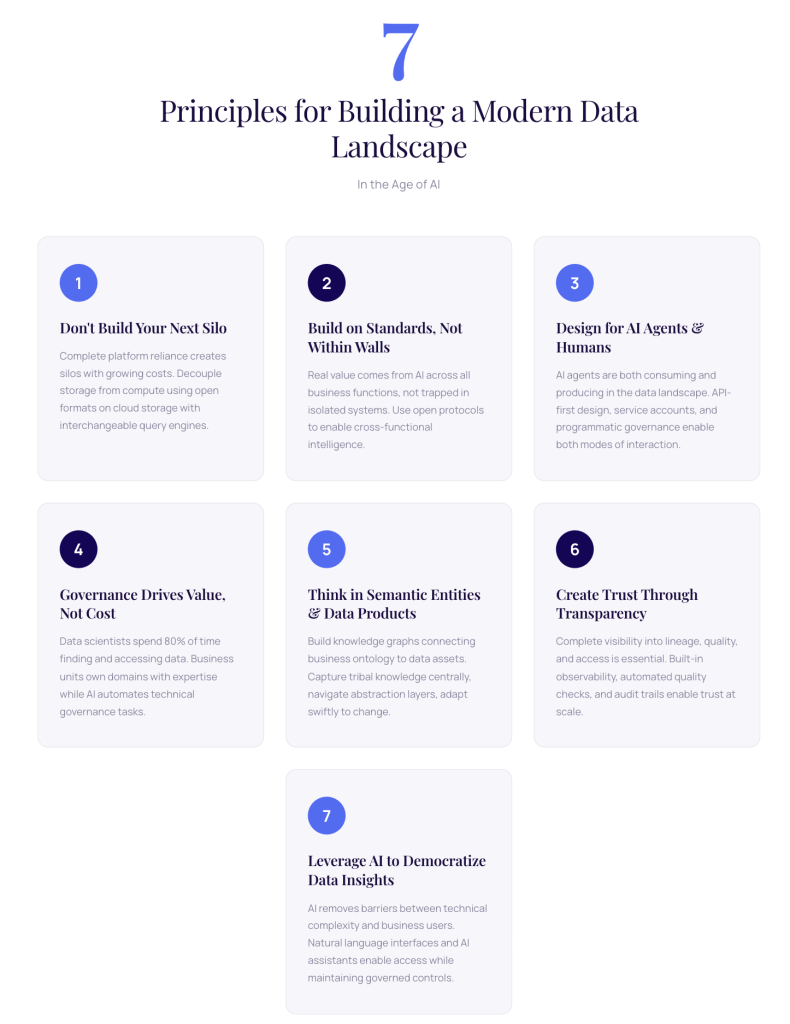

Moving from a bottlenecked, manual, and siloed system to an adaptive, AI-augmented architecture requires a shift in perspective. The following are seven principles I use when weighing choices in building out a data landscape. They go beyond simply integrating tools and address the foundation of data strategy.

Seven Principles for an AI-Augmented Data Architecture

1. Don’t Build Your Next Silo: Data Sovereignty & Control

The Principle: Organizations must maintain full ownership and control of their data, actively pursuing architectures that mitigate lock-in and vendor capture.

There’s growing recognition that surrendering core data assets to proprietary platforms can limit strategic flexibility. Solutions like Snowflake and Databricks offer genuine value and capabilities, but the decision to adopt them should be made with full awareness of the long-term trade-offs. Complete reliance on a single platform often creates another form of silo — one with substantial and growing costs. The deeper the dependency, the harder it becomes to adopt new technologies or change strategies, potentially leading to expensive and complex migration projects.

Data sovereignty means retaining the power to decide where your data lives, who can access it, and how it’s used. This isn’t a criticism of specific platforms; it’s about making conscious, informed decisions that ensure you have the option to change.

In Practice: Decouple storage from compute as much as possible. Store your data cost-effectively on cloud object storage (S3, Azure Blob, GCS) using open formats like Iceberg, Delta Lake, or Parquet. Use separate, interchangeable query engines (Trino, Spark, DuckDB) that provide SQL access to that data. This means you can swap compute layers based on performance, cost, or feature needs without migrating your data.
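
As a rough illustration of swapping compute without migrating data, here is a minimal sketch using only the Python standard library. It is not a production setup: a CSV file stands in for an open table format such as Parquet or Iceberg, and the two engine classes (`SqliteEngine`, `PurePythonEngine`) and the `QueryEngine` interface are hypothetical names standing in for interchangeable engines such as Trino, Spark, or DuckDB.

```python
import csv
import sqlite3
import tempfile
from pathlib import Path
from typing import Protocol


class QueryEngine(Protocol):
    """Hypothetical engine interface: total `amount` per `region`."""

    def revenue_by_region(self, path: Path) -> dict: ...


class SqliteEngine:
    """Loads the file into an in-memory SQLite database and aggregates in SQL."""

    def revenue_by_region(self, path: Path) -> dict:
        with path.open(newline="") as f:
            rows = [(r["region"], float(r["amount"])) for r in csv.DictReader(f)]
        conn = sqlite3.connect(":memory:")
        conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
        conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
        result = dict(conn.execute(
            "SELECT region, SUM(amount) FROM sales GROUP BY region"))
        conn.close()
        return result


class PurePythonEngine:
    """Streams the same file and aggregates in plain Python."""

    def revenue_by_region(self, path: Path) -> dict:
        totals: dict = {}
        with path.open(newline="") as f:
            for r in csv.DictReader(f):
                totals[r["region"]] = totals.get(r["region"], 0.0) + float(r["amount"])
        return totals


def write_sample(directory: Path) -> Path:
    """Writes the shared data file once; neither engine owns it."""
    path = directory / "sales.csv"
    with path.open("w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["region", "amount"])
        writer.writerows([["EMEA", 120.0], ["APAC", 80.0], ["EMEA", 30.0]])
    return path


def run_with_each_engine() -> list:
    """Runs the same question through both engines against the same file."""
    with tempfile.TemporaryDirectory() as tmp:
        path = write_sample(Path(tmp))
        engines = [SqliteEngine(), PurePythonEngine()]
        return [engine.revenue_by_region(path) for engine in engines]
```

Both engines read the same file and return the same totals. The data stays where it is, and only the compute layer changes.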

2. Open & Hyper-Agnostic: Build on Standards, Not Within Walls

The Principle: Aim for seamless integration across formats, systems, and clouds by building on open standards rather than proprietary connectors.

Today’s data landscape typically spans multiple clouds, numerous tools, and countless data formats. An architecture that can work with this diversity — without becoming a complex mess of custom integration code — offers significant advantages.

Here’s an important consideration: real value comes from running AI and analytics across combinations of business processes and systems, not within isolated silos. Most enterprise software vendors are now bringing AI features directly into their platforms — and there is value in those capabilities. But the scope of insight these embedded AI features can provide is limited by the boundaries of that single system.

AI becomes a more significant competitive factor when you can combine insights and operate across all business functions — sales, operations, finance, supply chain, customer service. This requires breaking down the walls between domain-focused solutions. Open and industry-standard protocols and table formats create the interoperability needed to achieve this. A hyper-agnostic approach reduces dependence on any single vendor’s roadmap and enables the cross-functional intelligence that can drive business impact.

In Practice: Where feasible, prioritize tools that natively support open protocols and industry standards. Be cautious of platforms that require proprietary connectors for basic functionality. Design your data architecture as loosely coupled services that communicate only through well-documented, standard interfaces.

3. Accessible to AI Agents & Humans: Design for Both

The Principle: The data landscape should be built by and for both AI agents and humans, with agents handling complexity and scale while humans provide governance and direction.

The evolution of data consumption has changed significantly. Traditionally, data landscapes were built primarily for dashboards and reporting — humans looking at charts and tables. Then we added support for ML and data science workloads. Now we’re seeing increased AI agent consumption: agents supporting operational processes, powering conversational analytics, making real-time decisions, and increasingly, building and modifying the data infrastructure itself.

AI agents are no longer just consuming data; they are helping build pipelines, optimize schemas, generate transformations, and even design data products. They excel at scale, pattern recognition, and routine analysis. Humans provide the strategic thinking, ethical oversight, and creative problem-solving.

This dual role — AI as both consumer and builder — requires a fundamentally different architecture. We need to design our data landscape with both modalities in mind: accessibility patterns that work for APIs and humans, authentication that supports both service accounts and user accounts, security models that can govern programmatic and interactive access, and access controls that scale to thousands of AI agents while remaining auditable.

In practice, this means a data architecture that is fundamentally machine-readable and programmable. API-first design, robust metadata, and semantic layers that AI can interpret and act upon become essential.

In Practice: Implement rich metadata management that includes semantic descriptions AI can both understand and generate. Build API-first data products that work well for both human developers and autonomous agents. Develop governance frameworks that enable AI agents to propose schema changes, transformation logic, or new data products for human review and approval.
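
One way to picture the "agent proposes, human approves" pattern is the sketch below. All names here (`SchemaChangeProposal`, `SchemaRegistry`, the agent and reviewer identities) are hypothetical and chosen for illustration; a real implementation would sit on top of whatever catalog and workflow tooling you already use.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class SchemaChangeProposal:
    """A change an AI agent wants to make; nothing is applied until review."""
    table: str
    add_column: str
    column_type: str
    rationale: str
    proposed_by: str  # agent identity, e.g. a service account name
    status: Status = Status.PENDING
    reviewed_by: Optional[str] = None


@dataclass
class SchemaRegistry:
    """Holds current schemas and an audit trail of review decisions."""
    schemas: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def review(self, proposal: SchemaChangeProposal, reviewer: str, approve: bool) -> None:
        if proposal.status is not Status.PENDING:
            raise ValueError("proposal has already been reviewed")
        proposal.reviewed_by = reviewer
        proposal.status = Status.APPROVED if approve else Status.REJECTED
        if approve:
            # Only an approved proposal changes the registered schema.
            columns = self.schemas.setdefault(proposal.table, {})
            columns[proposal.add_column] = proposal.column_type
        self.audit_log.append(
            f"{reviewer} {proposal.status.value} {proposal.table}.{proposal.add_column} "
            f"(proposed by {proposal.proposed_by})"
        )
```

The agent can generate as many proposals as it likes, but the schema only changes inside `review`, and every decision leaves an audit entry naming both the agent and the human reviewer.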

4. Governance is a Value-Driving Business Function — Not a Tech Cost Center

The Principle: Data ownership and governance work better when moved from central IT bottlenecks into the business units, with AI agents helping to reduce the manual workload.

Governance is a key value driver, not a technical cost center. In my experience, data scientists spend roughly 80% of their time finding data sources of suitable quality and getting access to them, and the same holds for analytics and data engineering workloads. When governance works well, this time decreases significantly. When it doesn’t, governance becomes a primary bottleneck that reduces productivity and slows innovation.

Traditional centralized governance often creates these bottlenecks. A more federated approach — where business units own their data domains, define their quality standards, and manage access — empowers domain experts who understand the data best. Establishing data quality, managing access, and defining what data means requires deep business expertise. The people most invested in getting this right are the business and domain owners themselves — they live with the consequences of poor data quality every day. IT teams, no matter how skilled, simply can’t have this depth of domain knowledge across all business functions.

However, for this to succeed, we need technology and tools that remove the technical burden. Through AI and automation, we can simplify governance so that business owners can focus on higher-level ownership and governance activities — defining what “good” looks like, making access decisions, resolving ambiguities — rather than wrestling with technical implementation details.

AI agents are the force multiplier here. They can automatically monitor quality, flag anomalies, suggest access policies, implement routine governance tasks, and generate documentation. This shifts the human role from operational execution to strategic oversight and decision-making.

In Practice: Explore data mesh principles where domain teams take clear ownership of their data products. Utilize AI-powered tools that automate quality monitoring, lineage tracking, and compliance checks. Establish clear accountability where business owners make governance decisions with appropriate technical support.
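
To make the split between business ownership and automated monitoring concrete, here is a small sketch, assuming the domain owner expresses "good" as a maximum share of missing values per column. The function names and the threshold format are illustrative assumptions, not any particular tool's API.

```python
def null_rates(rows: list) -> dict:
    """Share of rows in which each column is missing or None."""
    if not rows:
        return {}
    columns = {col for row in rows for col in row}
    return {
        col: sum(1 for row in rows if row.get(col) is None) / len(rows)
        for col in columns
    }


def flag_violations(rows: list, max_null_rate: dict) -> dict:
    """Returns columns whose null rate exceeds the owner's threshold.

    `max_null_rate` maps column name to the highest acceptable null rate,
    as defined by the business owner of the data product.
    """
    rates = null_rates(rows)
    return {
        col: rate
        for col, rate in rates.items()
        if col in max_null_rate and rate > max_null_rate[col]
    }
```

For example, with thresholds `{"customer_id": 0.0, "email": 0.25}` and a batch in which half the `email` values are missing, only `email` is flagged. The owner decides the thresholds; the check itself can run unattended.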

5. Business Semantics at the Core: Think in Linked Semantic Entities and Data Products, Not Pipelines

The Principle: Analytics is far more effective when grounded in a connected graph of governed data products rather than fragmented point-to-point pipelines.

Shift your thinking from building data pipelines to creating data products. A data product is a well-defined, discoverable, and reusable asset with clear ownership, documented meaning, and built-in quality expectations.

The key is connecting these through a knowledge graph approach that models the semantics of your business and links them to the underlying data landscape. This works in three connected layers:

At the top sits your business ontology — showing how your business is structured, what entities exist, how they relate, and what the governing processes are. This captures “tribal knowledge” centrally and provides reasoning pathways for both humans and AI agents.

In the middle, you have governed data products containing information about linked data assets, transformations, ownership, and quality scoring.

At the foundation, you maintain up-to-date metadata about your enterprise data assets, AI/ML models, and lineage across all modalities and providers.

This layered approach allows you to model business semantics, capture knowledge centrally, and connect it to the data landscape all the way through. You can dramatically reduce the number of data products needed while identifying gaps easily. When business or technology needs change, you can swiftly adapt. The semantic layer captures business logic once, ensuring consistency across all downstream uses. Instead of building custom pipelines for every use case, you create reusable, composable products.

In Practice: Define data products with clear interfaces, service levels, and ownership. Build a knowledge graph that captures relationships between business entities. Implement a semantic layer that translates business questions into consistent data queries, making data usage far easier for non-technical teams and AI agents alike.
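
The sketch below shows, in a deliberately tiny form, how an ontology and a set of data products can be linked so that both discovery and gap-finding become simple lookups. The `DataProduct` fields, the example entities, and the helper functions are all assumptions made for illustration; a real knowledge graph would typically live in a graph store or catalog rather than in Python lists.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataProduct:
    """A governed data product with ownership and a service level."""
    name: str
    owner: str
    description: str
    freshness_sla_hours: int
    entities: frozenset  # business entities this product covers


# Business ontology as (subject, relation, object) triples.
ONTOLOGY = [
    ("Customer", "places", "Order"),
    ("Order", "contains", "Product"),
]


def related_entities(entity: str, ontology: list) -> set:
    """Entities directly linked to `entity`, in either direction."""
    related = set()
    for subject, _relation, obj in ontology:
        if subject == entity:
            related.add(obj)
        elif obj == entity:
            related.add(subject)
    return related


def products_for(entity: str, products: list, ontology: list) -> list:
    """Names of products covering the entity or one of its direct neighbours."""
    wanted = {entity} | related_entities(entity, ontology)
    return sorted(p.name for p in products if p.entities & wanted)


def uncovered_entities(products: list, ontology: list) -> set:
    """Ontology entities that no data product covers yet (the gaps)."""
    all_entities = {e for s, _r, o in ontology for e in (s, o)}
    covered = set().union(*(p.entities for p in products))
    return all_entities - covered
```

With one product covering `Customer` and another covering `Order`, a question about customers surfaces both products through the `places` relation, and `uncovered_entities` reports `Product` as a gap.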

6. Quality, Lineage, and Transparency: Trust Through Visibility

The Principle: Trust is built through end-to-end lineage, quality metrics, auditability, and secure access — all as fundamental components of the stack.

It is difficult to trust what you cannot see. Modern data platforms must provide complete visibility into data lineage (origins and transformations), automated quality metrics, comprehensive audit trails (who accessed or modified what), and secure access controls.

These capabilities must be built in from the start, not added as afterthoughts. Every data product should come with observability, quality indicators, and clear documentation of its provenance, so that both human auditors and AI monitoring agents can verify it.

In Practice: Implement column-level lineage tracking across all transformations. Build automated data quality frameworks that detect and alert on issues early. Create granular audit logs for all data access and modifications. Explore attribute-based access control to scale security dynamically with organizational complexity.
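
As a sketch of how attribute-based access control and audit logging can fit together, consider the example below. The policy itself (the subject must belong to the resource's domain and hold sufficient clearance) is an assumption chosen for illustration, as are the class and field names; real policies are usually richer and externally configured.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Subject:
    """A human user or an AI agent, described by attributes rather than roles."""
    name: str
    domains: frozenset  # business domains the subject belongs to
    clearance: int      # higher means more sensitive data is allowed


@dataclass(frozen=True)
class Resource:
    name: str
    domain: str
    sensitivity: int


@dataclass
class AccessController:
    audit_log: list = field(default_factory=list)

    def is_allowed(self, subject: Subject, resource: Resource) -> bool:
        # Illustrative policy: matching domain AND sufficient clearance.
        allowed = (
            resource.domain in subject.domains
            and subject.clearance >= resource.sensitivity
        )
        # Every decision is recorded, whether access was granted or denied.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "subject": subject.name,
            "resource": resource.name,
            "allowed": allowed,
        })
        return allowed
```

Because the decision depends on attributes rather than on a per-user grant, the same rule covers a new analyst or a thousand new agents without additional policy entries, and the audit log captures each decision for later review.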

7. Responsible Democratization: AI as the Translator

The Principle: Minimize technical or role barriers so people throughout the organization can discover, access, and act on insights responsibly.

Data becomes more valuable when more people can use it effectively. The goal is responsible democratization: making data discoverable and accessible while maintaining necessary governance.

Here, AI acts as a translator between technical complexity and business users. Natural language interfaces, AI-powered assistants, and self-service analytics enable business users to explore data without writing SQL or Python. AI agents can translate complex data product outputs into natural language summaries or embed them directly into operational workflows. Governed access ensures people only see data they are authorized to use.

In Practice: Implement a powerful data catalog with effective search. Provide multiple interfaces for different skill levels — from no-code BI tools to notebooks. Utilize AI assistants to help users formulate queries, interpret results, and receive personalized insights directly within their day-to-day business applications.
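
Catalog search does not need to start out sophisticated. The following is a minimal keyword-overlap sketch, assuming the catalog is a simple mapping from data product name to description; the function name and scoring rule are illustrative, and a production catalog would more likely use full-text or semantic search.

```python
def search_catalog(query: str, catalog: dict) -> list:
    """Ranks catalog entries by how many query words appear in name or description."""
    terms = set(query.lower().split())
    scored = []
    for name, description in catalog.items():
        words = set((name.replace("_", " ") + " " + description).lower().split())
        score = len(terms & words)
        if score:
            scored.append((score, name))
    # Highest score first; ties are broken alphabetically for stable output.
    return [name for score, name in sorted(scored, key=lambda s: (-s[0], s[1]))]
```

An AI assistant can sit in front of a function like this, turning a business user's question into search terms and explaining the results, while governed access decides which entries that user is shown at all.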

Bringing It All Together

These seven principles are interconnected. Data sovereignty enables openness. Business semantics support AI accessibility. Federated governance benefits from transparency. Democratization depends on quality.

The modern data landscape isn’t just about adopting new technologies; it’s about reconsidering how organizations manage data when AI can be both the architect and the analyst.

By working with principles like these, you can build toward an architecture where humans and AI collaborate at every layer: AI handles the heavy lifting of construction, optimization, and routine analysis, while humans provide the strategic direction, governance, and ethical oversight.

This approach shifts the data team’s role from constantly building and manually maintaining infrastructure to orchestrating an autonomous data ecosystem — providing direction and oversight rather than doing all the work themselves.

There’s no single right answer for every organization, but hopefully these principles provide a useful starting point for the conversation.